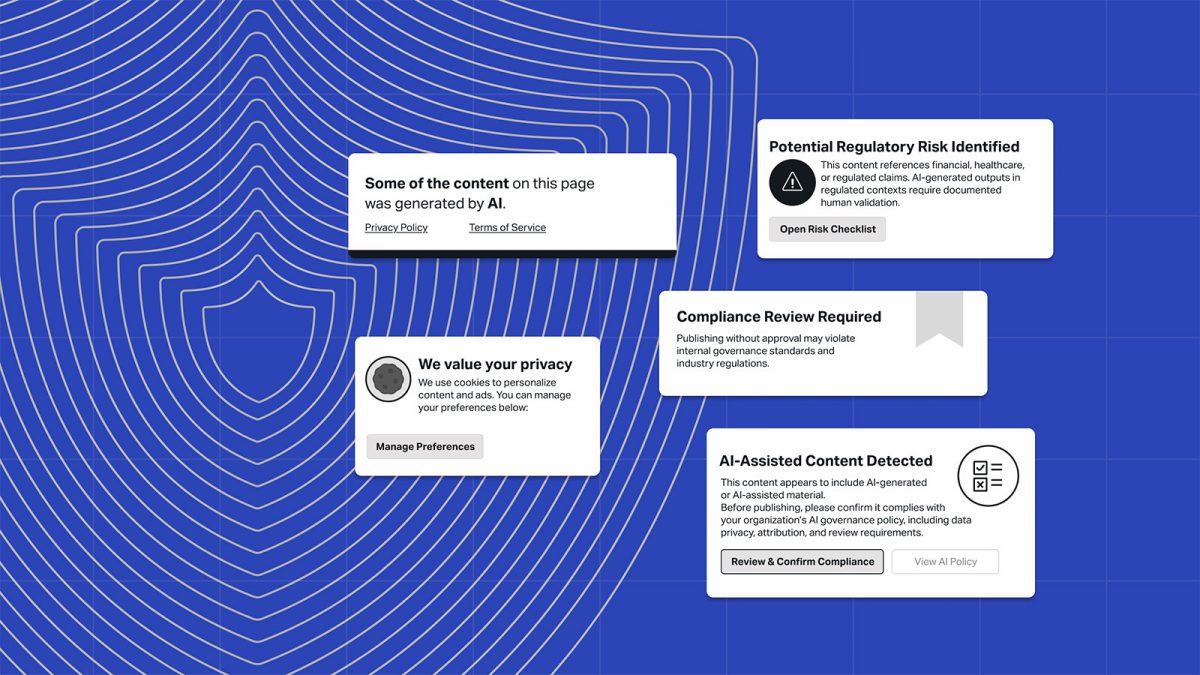

Enterprises are rethinking how they approach AI-generated content. With 72% of U.S. companies now identifying AI as a material risk in their public disclosures, up from just 12% two years ago, the need for robust governance frameworks has never been clearer.

As highlighted by [WordPress VIP](https://wpvip.com), AI technology has evolved faster than the compliance mechanisms meant to govern it, leaving organizations exposed to risks ranging from legal disputes to reputational damage. Tim Ahlenius, Vice President of Strategic Initiatives at Americaneagle.com, emphasizes that these risks are no longer theoretical. “They show up in conversations during sales and client engagements regularly,” he notes.

Legal and IP Risks in AI Content

The legal landscape surrounding AI-generated content remains ambiguous. Top concerns include copyright ownership, the security of training data, and the inadvertent reproduction of protected material. The large language models (LLMs) behind many AI tools are often trained on publicly available online data, which raises unresolved questions about intellectual property rights.

Ahlenius explains, “Brands don’t want to be the test case for unresolved case law. Industries where intellectual property is central, such as media and technology, are particularly cautious. The uncertainty isn’t necessarily stopping innovation, but it is slowing deployment in regulated environments.” Without proper governance, businesses risk lawsuits and financial penalties stemming from copyright violations.

Regulatory and Compliance Challenges

For industries like healthcare, finance, and higher education, compliance demands are particularly stringent. AI-generated content must align with regulations and standards such as GDPR, FDA requirements, SEC rules, and the WCAG accessibility guidelines.

Ahlenius remarks, “AI-generated content can’t just be ‘mostly right.’ It has to meet strict regulatory criteria, especially in industries with significant oversight. Concerns include claims accuracy, required disclaimers, accessibility compliance, and data privacy risks tied to sensitive prompts.” As global AI governance standards emerge, businesses face mounting pressure to ensure their AI tools meet legal requirements across multiple regions.

Brand and Reputational Risks

The risks posed by AI extend beyond compliance and legal issues. Improper use of AI can erode trust in a brand. Hallucinated facts, biased content, and tone misalignment are just some of the dangers enterprises face when scaling AI-driven operations.

“When AI generates authoritative-sounding but inaccurate content, the damage can be immediate,” Ahlenius warns. Without governance, businesses risk scaling inconsistency and misinformation just as quickly as they scale productivity. Companies must ensure AI tools adhere to established voice and tone guidelines to protect their brand identity.

Operational Risks Require Oversight

Even when businesses officially authorize specific AI tools, employees may still turn to unvetted platforms. This introduces operational risks, including compromised data security and inconsistent practices across teams. Governance frameworks must address these gaps to protect sensitive data and keep practices consistent across the organization.

Ahlenius points out, “The question isn’t whether AI is allowed—it’s what happens when something wrong gets published. Operational oversight is critical to prevent these scenarios from escalating.”

What To Do

- For Developers: Ensure AI models and tools comply with the regulations and standards that apply in relevant industries, such as GDPR, WCAG, and FDA requirements.

- For Agency Owners: Advise clients on the importance of developing governance frameworks for AI-generated content to mitigate brand and reputational risks.

- For Enterprise Site Operators: Convene internal stakeholders to audit current AI usage and address risks in legal, compliance, operational, and reputational domains.

- For Hosting Professionals: Collaborate with enterprise clients to ensure hosting solutions align with privacy laws and support AI compliance needs.